The biggest barrier in visual storytelling is not always a lack of ideas. More often, it is the gap between a good still image and a format that feels alive enough for social feeds, product pages, or short-form video. That is where Image to Video AI becomes interesting. Instead of asking users to learn a traditional editing workflow, it frames the task in a simpler way: upload a photo, describe what should happen, wait for processing, and export the result. That shift matters because many creators do not need a full studio. They need a fast way to turn a static visual into motion with less friction.

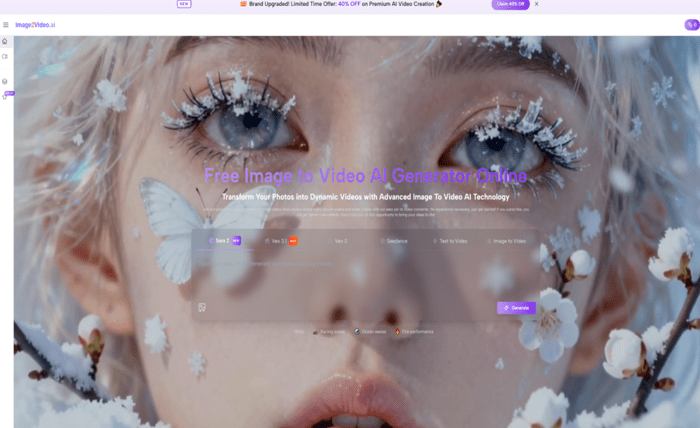

What stands out on the site is not a highly technical promise but a very direct user path. The platform presents image-to-video generation alongside related options such as text to video, text to image, image to image, and several effect-driven formats. In practical terms, that suggests a product built for experimentation rather than a narrow one-purpose utility. For someone making ads, social posts, creator clips, or personal montage videos, that broader environment can make the first test feel more approachable.

Why Motion Changes How Images Get Interpreted

A still image communicates a moment. A moving image communicates direction, emotion, and expectation. That difference is why many product photos, portraits, and scenic compositions feel stronger once subtle motion is introduced.

Why Static Photos Often Need Extra Context

A single image can be beautiful and still feel incomplete when used in modern content channels. Social platforms reward movement. Product presentations benefit from pacing. Personal memory pieces often feel more emotional when there is progression, not just display.

Why Small Motion Can Feel More Cinematic

In my reading of the platform, the appeal is not only animation itself but controlled perception. Camera movement, transitions, and visual effects can make an ordinary photo feel staged with more intention. Even when the source material is simple, motion changes how viewers read depth and focus.

How Easier Tools Lower Creative Resistance

A lot of people avoid video work because the workflow feels heavy. A browser-based generator changes that expectation. When the process is reduced to a few actions, experimentation becomes much easier than committing to a full edit timeline.

Why Simplicity Often Improves First Results

Simple workflows do not guarantee better output, but they often increase the chance that users will actually test multiple ideas. That matters because image-to-video quality usually improves when people are willing to refine prompts and retry.

How The Platform Organizes Its Creative Logic

The homepage presents the tool as a photo-to-video generator built around prompt-driven transformation. It also places this experience beside multiple model names and adjacent creative modes, which gives the product a wider “AI video workspace” feel.

What The Main Interface Suggests About Intent

The structure signals that the platform wants users to begin quickly. There is no emphasis on installing software, building timelines, or learning dense menus before generating. The practical message is clear: start from an image and let the system translate intention into motion.

Why Model Variety Changes User Expectations

The site references options such as Sora 2, Veo 3.1, Veo 3, and Seedance. Even without turning that into a technical comparison, their presence affects perception. It tells users that the product is positioning itself around modern AI video generation rather than a basic slideshow maker.

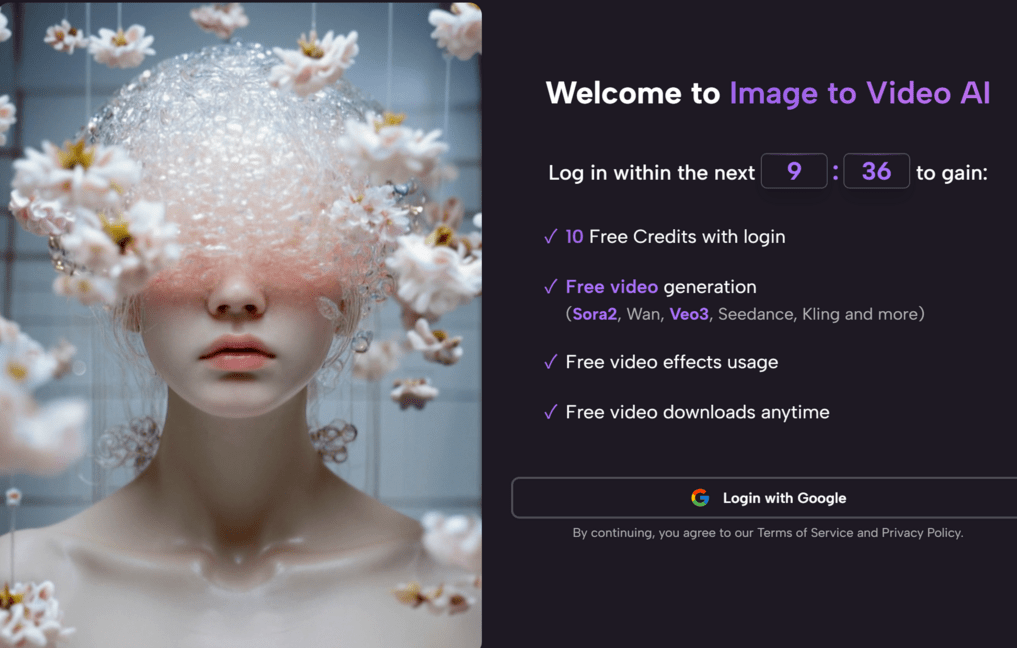

How Effect Pages Expand The Use Cases

The effects section introduces ready-made directions such as kiss, dance, fight, hug, twerk, old photo animation, and muscle transformation. This broadens the audience. Some people want cinematic movement. Others want fast, playful outputs that are easier to publish and share.

How The Official Workflow Actually Works

The most useful part of the site is that the generation process is clearly laid out. It is not hidden behind vague marketing language. The steps are simple and easy to understand.

Step One: Upload The Source Picture

The platform asks the user to choose and upload a picture. The page indicates support for JPEG and PNG formats. This matters because the barrier to entry remains low. Most users already have compatible files ready to test.
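
For anyone preparing a batch of files before uploading, a quick local check can confirm that an image really is a JPEG or PNG. The sketch below is illustrative only and is not part of the site. The function names `detect_image_format` and `detect_file_format` are hypothetical, and the check relies on the standard leading-byte signatures of the two formats.

```python
from typing import Optional

# Standard file signatures (leading bytes) for the two formats the page lists.
PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
JPEG_SIGNATURE = b"\xff\xd8\xff"


def detect_image_format(data: bytes) -> Optional[str]:
    """Return "png" or "jpeg" based on the leading bytes, else None."""
    if data.startswith(PNG_SIGNATURE):
        return "png"
    if data.startswith(JPEG_SIGNATURE):
        return "jpeg"
    return None


def detect_file_format(path: str) -> Optional[str]:
    """Read only the first eight bytes of a file and classify it."""
    with open(path, "rb") as handle:
        return detect_image_format(handle.read(8))
```

Checking the bytes rather than the file extension catches renamed files, such as a WebP image saved with a `.jpg` name.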

Step Two: Describe The Intended Motion

After upload, the next task is to enter a text prompt. This is where the creative instruction happens. The user explains what kind of movement, mood, or transformation they want to see.

Step Three: Wait For Processing To Finish

The site says the system processes the request and usually takes around five minutes. That is short enough to feel accessible, though it also suggests users should expect some waiting rather than instant real-time editing.

Step Four: Check The Result And Share It

Once the status shows as completed, the generated video can be reviewed, downloaded, and shared. From a workflow perspective, that is a full browser-based loop: input, instruction, processing, output.
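
The four steps can be pictured as a submit-then-poll loop. The sketch below is a minimal simulation under stated assumptions: the site does not document a public API, so `FakeGenerationService`, its `submit` and `status` methods, and the polling intervals are all hypothetical stand-ins used only to show the shape of the loop.

```python
import time
from typing import Callable, Dict


class FakeGenerationService:
    """Hypothetical stand-in for a generation backend; not a real API."""

    def __init__(self, polls_until_done: int = 3) -> None:
        self._polls_until_done = polls_until_done
        self._remaining: Dict[str, int] = {}

    def submit(self, image: bytes, prompt: str) -> str:
        # Steps one and two: an uploaded image plus a text prompt.
        if not image or not prompt.strip():
            raise ValueError("an image and a non-empty prompt are both required")
        job_id = f"job-{len(self._remaining) + 1}"
        self._remaining[job_id] = self._polls_until_done
        return job_id

    def status(self, job_id: str) -> str:
        # Each status check moves the simulated job one step closer to done.
        if self._remaining[job_id] > 0:
            self._remaining[job_id] -= 1
            return "processing"
        return "completed"


def wait_for_completion(
    service: FakeGenerationService,
    job_id: str,
    poll_seconds: float = 15.0,
    timeout_seconds: float = 600.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Step three: poll until completed; return the number of status checks."""
    checks = 0
    waited = 0.0
    while True:
        checks += 1
        if service.status(job_id) == "completed":
            return checks  # Step four: the result is ready to review.
        if waited >= timeout_seconds:
            raise TimeoutError(f"{job_id} still processing after {waited:.0f}s")
        sleep(poll_seconds)
        waited += poll_seconds
```

The assumed ten-minute timeout leaves headroom over the roughly five-minute processing time the site mentions, and passing `sleep` as a parameter lets the loop be tried out without actually waiting.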

Where The Product Feels Most Practical

A tool like this becomes easier to judge when seen through actual usage scenarios rather than abstract feature lists.

For Product Promotion And Store Visuals

Static product photos often feel limited when placed in ad creatives. Motion can give shape, rhythm, and perceived detail to a listing. Even a light camera move can make the product feel more present.

For Social Content And Short Clips

Creators often need more volume than polish. A photo transformed into a short video can become a teaser, filler asset, or visual variation for a posting schedule.

For Memory Videos And Emotional Montage Work

Family pictures, travel photos, or older portraits often benefit from slow motion effects and music-oriented presentation. In that kind of context, emotional pacing matters more than technical complexity.

For Educational And Presentation Content

Diagrams, visual examples, and slide-based assets can become easier to follow when motion guides the eye. The value here is not spectacle but clarity.

What The Platform Highlights Most Clearly

The site does not present itself as a traditional editor. It emphasizes speed, accessibility, and breadth of use.

| Feature Area | What The Site Emphasizes | Why It Matters |
| --- | --- | --- |
| Input Method | Upload image and add text prompt | Keeps the workflow simple |
| Access Style | Browser-based use | No software setup required |
| Processing Flow | Generate, wait, export | Easy to understand for beginners |
| Output Goal | Dynamic video from still images | Useful for content reuse |
| Extra Modes | Text to video and other tools | Expands beyond one narrow task |
| Effect Options | Multiple themed generators | Helps casual users start faster |
| Camera Control | Pan, zoom, tilt, rotation | Adds more cinematic direction |
| Subscription Angle | Better effects for subscribers | Suggests quality tiers matter |

What Feels Promising And What Feels Limited

The platform is easy to understand, but image-to-video generation is never fully automatic in the artistic sense. The user still shapes the result through source image choice and prompt quality.

What Looks Promising In Everyday Use

In my view, the strongest part of the platform is the low-friction workflow. It does not ask people to become editors first. It asks them to think visually and then test quickly. That is useful for creators who need more output without building a heavy production process.

What Probably Requires Patience

Good results likely depend on the quality of the original image and the clarity of the prompt. Some generations may need multiple attempts. That is common with AI video tools and should be treated as part of the process, not as a surprise.

Why Simplicity Does Not Remove Creative Judgment

Even with a streamlined tool, users still need to decide what kind of motion makes sense. Too much movement can feel artificial. Too little can make the result look like a slightly animated still. The better approach is usually intentional moderation.

Why This Type Of Tool Fits A Broader Shift

What this platform represents is larger than one website. It reflects a broader move from complex production software toward prompt-guided media creation that non-specialists can use.